The 5 Common Technical SEO Issues to Watch in 2023

B2B buyers spend more time researching solutions independently online than on any other buying activity,[1] and 68% of online experiences begin with a search engine.[2] Your visibility on Google is critical to sales and marketing success in 2023.

Your content strategy, site structure, on-page SEO, backlinks and more all affect your Google ranking. However, technical SEO is the foundation you need to get right if you want those other strategies to succeed.

What is technical SEO?

To make sure we’re all on the same page, “technical SEO” covers the elements of your website that directly govern how Google (or any other search engine) engages with your pages: specifically, how search engines crawl and index your content. For example, page speed, site structure, heading structure, redirects and alt text all influence your technical SEO.

Technical SEO stands in contrast to “on-page” and “off-page” SEO, which cover the actual content on your website, and how other websites engage with your website (for example, backlinks).

Why take technical SEO seriously?

Good technical SEO ensures that the other efforts you put into organic visibility actually pay off. Particularly in the context of the Page Experience Update based on Google’s Core Web Vitals metrics, getting the technical elements of SEO right has never been more important for SEO experts in the UK.[3] Fundamentally, you can write the best content in the world and target it at a great keyword, but if the page takes ten minutes to load and is full of dead links, it won’t rank. As just one example, bounce rate increases by 90% if page load time increases from one second to five.[4] There is a reason that the best SEO agencies take technical SEO seriously.

What’s in this blog?

As a B2B digital marketing agency with expertise in long-form content and website design, organic visibility feeds into everything we do at Gripped. Our technical SEO skill and expertise are part of the reason our customers see real, tangible results. Here, we’ve put together a list of the five most common technical SEO issues we see and how we fix them for our customers.

1. Site speed issues

Page speed directly impacts ranking. This has been known since the Google Algorithm Speed update in 2021,[5] and its importance has only increased with the Core Web Vitals metrics Google now uses to prioritise ranking. Making sure that your page speed is up to scratch might be the most important thing to focus on from a technical SEO perspective in 2023.

How to fix this issue: The first challenge when it comes to page speed is quantifying the issue and identifying the right fix. In doing so, you should focus on four core metrics:

- First Contentful Paint (FCP): The first point at which a user can see anything on the screen. This should take less than 2 seconds.

- Largest Contentful Paint (LCP): The time it takes for the largest content element visible in the viewport (typically a hero image or the main block of text) to render. This should take no more than 2.5 seconds.

- First Input Delay (FID): Measures the time from when a user first interacts with a page to when the browser actually begins processing that interaction. This should be less than 100 milliseconds.

- Cumulative Layout Shift (CLS): This isn’t specifically page speed, but is a core metric Google associates with page speed, and quantifies how often users experience unexpected layout shifts when navigating a page. You should aim for a CLS score of 0.1 or less.
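To make those targets easier to act on, here is a minimal Python sketch that compares a set of measurements against the four thresholds listed above. The `THRESHOLDS` table and the `failing_metrics` function are illustrative names of our own, not part of any Google tool, and for simplicity the sketch treats any value above the target as a failure.

```python
# Targets taken from the list above. Times are in seconds; CLS is a unitless score.
THRESHOLDS = {
    "FCP": 2.0,
    "LCP": 2.5,
    "FID": 0.1,  # 100 milliseconds
    "CLS": 0.1,
}


def failing_metrics(measured: dict[str, float]) -> list[str]:
    """Return the names of the metrics that exceed their target."""
    return [
        name
        for name, limit in THRESHOLDS.items()
        if name in measured and measured[name] > limit
    ]


# Hypothetical measurements for a single page.
sample = {"FCP": 1.4, "LCP": 3.1, "FID": 0.08, "CLS": 0.25}
print(failing_metrics(sample))  # ['LCP', 'CLS']
```

In practice the measurements would come from a tool such as PageSpeed Insights; the sketch only shows the comparison step.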

Code minification, proper image compression, and next-gen video and image formats are all important techniques for optimising page speed. However, you also need to think strategically about complex and visual elements on your website. Although video backgrounds, large images, and title spinners are all engaging design elements that can improve user experience, they do negatively impact page speed. So, only include them if they serve a specific purpose.

Lastly, how you build your website can have an impact on the options at your disposal to optimise outcomes. For example, we prefer WordPress because of the customisation it provides, without making site changes too complex or technical.

We use PageSpeed Insights or Google Search Console to monitor page speed. A good web developer can help you master page speed, and site speed should be a major focus within your SEO audits.


2. Website security

Security issues can severely impact your website’s SEO. Up to 82% of users will leave a webpage if it’s not secure,[6] and this can lead to a considerable drop in your SERP (search engine results page) ranking.

For Google, website security is a top priority. Because secure websites also tend to rank higher, removing security issues should be a top priority for you too. Look out for:

Missing SSL certificates

SSL (Secure Sockets Layer) is a security protocol that ensures your website’s connection is encrypted and secure. Sites with no SSL certificate are considered unfit for sharing sensitive information. If your URLs start with http:// rather than https://, your site is not being served over a secure connection, usually because the SSL certificate is missing or not installed correctly. This reduces the credibility of your site to search engines and users.

How to fix this issue: You can buy an SSL certificate from your hosting provider or a certificate authority for a small fee. The certificate needs to be renewed periodically. If you miss the renewal, the certificate expires and the connection will no longer be treated as secure.
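Because a missed renewal is easy to overlook, it can help to monitor the expiry date. The following is a rough sketch using only Python’s standard library. The function names `fetch_not_after` and `days_until_expiry` are our own, and the date in the example is made up for illustration.

```python
import socket
import ssl
from datetime import datetime, timezone


def fetch_not_after(host: str, port: int = 443) -> str:
    """Connect to a host over TLS and return its certificate's notAfter string."""
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=10) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_until_expiry(not_after: str, now: datetime) -> int:
    """Whole days between `now` and the certificate's expiry timestamp."""
    expires = datetime.fromtimestamp(
        ssl.cert_time_to_seconds(not_after), tz=timezone.utc
    )
    return (expires - now).days


# Example with a made-up expiry date, in the format that getpeercert() returns.
now = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(days_until_expiry("Jan 31 00:00:00 2024 GMT", now))  # 30
```

To check a live site you would pass the result of `fetch_not_after("your-domain.example")` (a placeholder hostname) into `days_until_expiry` along with the current time, and raise an alert when the number drops below a margin you are comfortable with.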

Mixed content

Mixed content errors are most common right after migrating from HTTP to HTTPS. The issue arises when your CSS and JS files contain HTTP links, or when elements on the page are loaded via HTTP instead of HTTPS. This can undermine your SSL certificate, affecting website security and SERP ranking.

How to fix this issue: To avoid mixed content errors, always use only HTTPS URLs when embedding elements on your web pages. Also, make sure there are no embedded HTTP links in your website’s theme or installed plugins. Replace all HTTP links with the secured HTTPS version to resolve the issue.
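As a starting point for finding those links, here is a small sketch that scans a page’s HTML for resources loaded over plain HTTP. The `MixedContentFinder` class and its list of tags are our own simplification; a real audit would also need to look inside CSS and JS files, which this sketch does not do.

```python
from html.parser import HTMLParser


class MixedContentFinder(HTMLParser):
    """Collect page resources that are requested over http:// instead of https://."""

    # Tags that fetch a resource when the page loads, and the attribute holding the URL.
    # Ordinary <a> links are left out: they navigate, they do not embed content.
    RESOURCE_ATTRS = {
        "img": "src",
        "script": "src",
        "iframe": "src",
        "source": "src",
        "link": "href",
    }

    def __init__(self) -> None:
        super().__init__()
        self.insecure: list[tuple[str, str]] = []

    def handle_starttag(self, tag, attrs):
        wanted = self.RESOURCE_ATTRS.get(tag)
        for name, value in attrs:
            if name == wanted and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


page = """
<link rel="stylesheet" href="http://example.com/site.css">
<script src="https://example.com/app.js"></script>
<img src="http://example.com/logo.png" alt="Logo">
<a href="http://example.com/about">About</a>
"""

finder = MixedContentFinder()
finder.feed(page)
print(finder.insecure)
```

For the sample page, the stylesheet and the image are reported, while the secure script and the ordinary link are not.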

3. Crawlability issues

Search engines use tiny applications known as web crawlers to understand and index web pages. The more manageable the page is to crawl, the better optimised it is for search engines. Essentially, these web crawlers help search engines (like Google) decide whether your web page will rank or even have the chance to rank. So, if your site is not easily crawlable, it will miss out on the opportunity to rank, damaging your ability to use this critical inbound marketing channel.

Search engines also partially use crawlability to measure the user-friendliness of a site. A site that is not easy to crawl is considered confusing for the visitor. Search engines give low preference to such pages while ranking. Common crawlability issues include:

404 errors

404 errors indicate that the browser was able to communicate with the server, but the server could not find the requested page. Links to 404 pages confuse the web crawlers and make crawling that page more expensive for search engines. This negatively impacts your “crawl budget”, i.e. the amount of time and resources that Google devotes to crawling your site. They also negatively impact user experience. Both factors lead you to be deprioritised by search engines.

How to fix this issue: Remove all links leading to error pages or replace the links with another resource. Avoid mistyping page URLs. Misspelt URLs may also lead to 404 errors. Tools such as SEMrush can be used to locate 404 errors on a website.
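If you would rather script a quick check than rely on a tool, the idea can be sketched in a few lines of Python. The helper names `http_status` and `find_404s` are hypothetical, and the status lookup is passed in as a parameter so the logic can be tried without touching the network.

```python
import urllib.error
import urllib.request
from typing import Callable, Iterable


def http_status(url: str) -> int:
    """Return the HTTP status code for a URL, using a HEAD request."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urlopen raises for 4xx and 5xx responses; the code is on the exception.
        return error.code


def find_404s(
    urls: Iterable[str], get_status: Callable[[str], int] = http_status
) -> list[str]:
    """Return the URLs that respond with a 404."""
    return [url for url in urls if get_status(url) == 404]


# Offline example: a stand-in lookup table instead of real requests.
fake_statuses = {"/pricing": 200, "/old-offer": 404, "/blog": 200}
print(find_404s(fake_statuses, get_status=fake_statuses.get))  # ['/old-offer']
```

Note that some servers do not answer HEAD requests correctly, and that network failures such as timeouts are not handled here, so treat the results as a first pass rather than a full crawl.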

Missing alt text

The primary purpose of alternative text (alt text, or alt tags) is to improve the accessibility of your website by providing a description of the images displayed. Alt text also makes it possible for web crawlers to understand your images. Both factors positively impact SEO.

How to fix this issue: Every time you add an image to your website, provide a proper alt description. It’s that simple.
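For pages that already exist, a short script can list the images that still need a description. This is a minimal sketch; `MissingAltFinder` is a name we made up. It also flags images with an empty alt attribute, which can be legitimate for purely decorative images, so review the list by hand.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> whose alt text is missing or empty."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt_text = (attributes.get("alt") or "").strip()
        if not alt_text:
            self.missing.append(attributes.get("src") or "")


page = """
<img src="/hero.png" alt="Dashboard screenshot">
<img src="/chart.png">
<img src="/divider.png" alt="">
"""

finder = MissingAltFinder()
finder.feed(page)
print(finder.missing)  # ['/chart.png', '/divider.png']
```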

Too many links on one page

Internal and external links are good for SEO. But including too many links on a single page can negatively impact crawlability. Crawling bloated pages is complex and might waste your crawl budget. This can lead to other pages on your website not being crawled.

Including too many internal links on the same page dilutes their value to the search engines. Link stuffing, or including several links to high ranking websites that are not relevant to your page, impacts your website’s SEO negatively.

How to fix this issue: Don’t add more links (internal or external) than necessary. The key is to look for places where links can be naturally added. If it feels forced, it’s probably wrong. Stay clear of link stuffing and you should be all good.

4. Indexability issues

Indexability simply refers to the ability of search engines to analyse a web page and add it to their index. For a page to be indexed, it needs to be found by the web crawlers. So, indexability is directly related to crawlability. However, even if Google can crawl a site, it might not be able to index all the pages due to indexability issues. Common issues we see include:

Duplicate content

Having duplicate content (or multiple versions of the same content) on your website may significantly impact SEO performance. Google will typically index only one of the duplicate pages, filtering out all other instances. The page it selects may not be the one you want to rank. Duplicates can also split ranking signals between the competing versions, meaning that none of those pages ranks well.

How to fix this issue: Try not to use duplicate content on web pages. If there’s a need to create duplicate content, use a canonical tag or a noindex tag for the pages you don’t want to be indexed. To do this you can use SEO plugins such as Yoast SEO.
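Before deciding which pages need a canonical or noindex tag, you first have to find the duplicates. One simple approach, sketched below, is to fingerprint each page’s text and group the pages whose fingerprints match. The function names are our own, and this only catches copies that are identical once spacing and capitalisation are ignored; near-duplicates need a fuzzier comparison.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text: str) -> str:
    """Hash the text after collapsing whitespace and lowercasing it."""
    normalised = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def duplicate_groups(pages: dict[str, str]) -> list[list[str]]:
    """Group URLs whose body text is identical after normalisation."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, text in pages.items():
        groups[fingerprint(text)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical page text keyed by URL path.
pages = {
    "/services": "We help B2B companies grow.",
    "/services?ref=nav": "We help  B2B companies grow.",
    "/about": "Meet the team.",
}
print(duplicate_groups(pages))  # [['/services', '/services?ref=nav']]
```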

Disallowed internal resources

Webpage resources blocked by a “Disallow” instruction in your robots.txt file are not crawled by search engines. This can be useful if you don’t want the page crawled. But it will lead to poor search performance if applied incorrectly.

How to fix this issue: You can find details about disallowed internal resources in your robots.txt file. Update this file to unblock a resource.
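Python’s standard library includes a robots.txt parser, which makes it straightforward to test which URLs a given set of rules would block. The rules and URLs below are invented for illustration, and `blocked_urls` is our own helper name.

```python
from urllib.robotparser import RobotFileParser

# Example rules only; substitute the contents of your own robots.txt.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /assets/
"""


def blocked_urls(
    robots_txt: str, urls: list[str], user_agent: str = "Googlebot"
) -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for a crawler."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


urls = [
    "https://example.com/assets/site.css",
    "https://example.com/blog/post",
    "https://example.com/admin/login",
]
print(blocked_urls(ROBOTS_TXT, urls))
```

Here the stylesheet under /assets/ is blocked along with the admin page. Blocking CSS or JS in this way is a typical example of a “Disallow” rule that was probably applied more broadly than intended.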

Orphaned pages in the sitemap

A webpage without any internal links pointing to it is called an “orphan page”. Search engines usually discover a new page when crawlers reach it following a link from another page on your website, or the crawler finds the URL of the page in the XML sitemap. The total absence of internal links pointing to a page means the search engine depends only on the XML sitemap for indexing it. This considerably reduces the chance of the page being indexed.

Also, users won’t be able to reach these pages directly from your site, leading to a frustrating user experience. As a result, Google does not look favourably on orphaned pages.

How to fix this issue: Review all orphaned pages in your sitemap.xml files and do the following:

- If a page is no longer needed, unpublish it.

- If a page is valuable, link it with another page on your website.

- If a page serves a specific purpose and requires no internal linking, leave it as it is.
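Finding the orphaned pages in the first place comes down to comparing two sets: the URLs listed in your sitemap and the URLs that other pages link to. The sketch below assumes you already have the internal links from a crawl, represented as a dictionary from each page to the pages it links to. That structure and the function names are our own assumptions.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml: str) -> set[str]:
    """Extract every <loc> URL from a sitemap.xml document."""
    root = ET.fromstring(sitemap_xml)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def orphan_pages(sitemap_xml: str, internal_links: dict[str, set[str]]) -> list[str]:
    """Return sitemap URLs that no other page links to."""
    linked: set[str] = set()
    for source, targets in internal_links.items():
        # A page linking to itself does not make it reachable from elsewhere.
        linked.update(target for target in targets if target != source)
    return sorted(sitemap_urls(sitemap_xml) - linked)


sitemap = """\
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/pricing</loc></url>
  <url><loc>https://example.com/old-landing-page</loc></url>
</urlset>
"""

links = {
    "https://example.com/": {"https://example.com/pricing"},
    "https://example.com/pricing": {"https://example.com/"},
}
print(orphan_pages(sitemap, links))  # ['https://example.com/old-landing-page']
```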

5. Site architecture issues

Site architecture issues can confuse search engines, impacting your site’s SERP ranking. We identify and fix most of these problems with the help of Google Search Console or SEMrush. Common site architecture issues include:

Broken internal and external links

Broken links on your website affect the user experience negatively and can worsen your site’s ranking. They also give the crawlers the impression that your website is poorly maintained.

How to fix this issue: If a target web page returns an error, remove the link leading to the error page or replace it with another resource. Periodically check if all the URLs to the internal links are accessible.

Duplicate title tags

Duplicate title tags on multiple pages make it difficult for search engines to determine which page is relevant to a search query. As a result, the page might be left out entirely.

How to fix this issue: Provide unique and concise titles for each page. Include your most important keywords in the title tag.

Missing H1 tags

H1 tags help define the page’s topic for the search engines. Missing or empty H1 tags may lead to lower rankings, or to the page not ranking at all, on search result pages.

How to fix this issue: Provide a concise and relevant H1 tag for every page.
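Checking for this across many pages is easy to automate. Here is a minimal sketch that reports whether a page’s H1 is missing or empty; `H1Collector` and `h1_problem` are illustrative names rather than part of any SEO tool.

```python
from html.parser import HTMLParser
from typing import Optional


class H1Collector(HTMLParser):
    """Collect the text content of every <h1> on a page."""

    def __init__(self) -> None:
        super().__init__()
        self._in_h1 = False
        self.headings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self._in_h1 = True
            self.headings.append("")

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_h1 = False

    def handle_data(self, data):
        # Text inside nested tags such as <span> still counts towards the H1.
        if self._in_h1:
            self.headings[-1] += data


def h1_problem(html: str) -> Optional[str]:
    """Return 'missing', 'empty', or None when the page has a usable H1."""
    collector = H1Collector()
    collector.feed(html)
    if not collector.headings:
        return "missing"
    if not any(text.strip() for text in collector.headings):
        return "empty"
    return None


print(h1_problem("<h2>Pricing</h2><p>Plans start here.</p>"))  # missing
print(h1_problem("<h1>  </h1>"))  # empty
print(h1_problem("<h1><span>Pricing</span></h1>"))  # None
```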

Problems with redirect chains and loops

Long redirect chains and infinite loops can damage your SEO efforts. These make crawling difficult and also reduce site speed.

How to fix this issue: Do not use more than three redirects in a chain and, wherever possible, redirect each URL in the chain straight to the final destination.
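If you can export your redirect rules as a simple source-to-target mapping, both checks can be scripted. The sketch below is one way to do it under that assumption: `follow_redirects` traces a chain and labels it, and `flatten` produces a new mapping in which every URL points directly at its final destination. The paths are invented, and the three-hop limit comes from the advice above.

```python
def follow_redirects(
    start: str, redirects: dict[str, str], max_hops: int = 3
) -> tuple[list[str], str]:
    """Trace a redirect chain and label it 'ok', 'too long' or 'loop'."""
    chain = [start]
    seen = {start}
    while chain[-1] in redirects:
        target = redirects[chain[-1]]
        if target in seen:
            return chain + [target], "loop"
        chain.append(target)
        seen.add(target)
    hops = len(chain) - 1
    status = "too long" if hops > max_hops else "ok"
    return chain, status


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source URL straight at its final destination, skipping loops."""
    flat = {}
    for source in redirects:
        chain, status = follow_redirects(source, redirects, max_hops=len(redirects))
        if status != "loop":
            flat[source] = chain[-1]
    return flat


rules = {"/a": "/b", "/b": "/c", "/c": "/d", "/d": "/e", "/x": "/y", "/y": "/x"}
print(follow_redirects("/a", rules))  # (['/a', '/b', '/c', '/d', '/e'], 'too long')
print(follow_redirects("/x", rules))  # (['/x', '/y', '/x'], 'loop')
print(flatten({"/a": "/b", "/b": "/c"}))  # {'/a': '/c', '/b': '/c'}
```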

Specialist support helps sidestep technical challenges

Staying on top of technical SEO requires ongoing monitoring, regular SEO audits, and expert knowledge about the platforms you use to build and host your website. This article will point you in the right direction, but knowing the answers doesn’t guarantee that the right actions are taken. What’s more, there are other issues to think about, for example, making sure that your website is device responsive and mobile friendly.

At Gripped, we help our clients minimise technical B2B SEO challenges and maximise marketing outcomes. Our monthly Site Health Audit identifies and resolves B2B SEO issues to fine-tune your site’s SEO performance. Then, we can put in place the lead generation, qualification and nurture campaigns needed to grow your business. Get in touch to book your Free Growth Audit and see how Gripped can improve your SEO performance and unlock your business’s growth potential today.

[1] The New B2B Buying Process | Gartner

[2] 63 SEO Statistics

[3] Google Page Experience Update – Desktop Rollout Complete

[4] New Industry Benchmarks for Mobile Page Speed – Think With Google

[5] Page Speed and Google June-July 2021 Core Algo Updates

[6] SSL Now Included for All Marketing Customers – HubSpot Community

Reach Your Revenue Goals. Grow MRR with Gripped.

Discover how Gripped can help drive more trial sign-ups, secure quality demos with decision makers and maximise your marketing budget.

Here's what you'll get:

- Helpful advice and guidance

- No sales pitches or nonsense

- No obligations or commitments

Book your free digital marketing review

Other Articles you maybe interested in

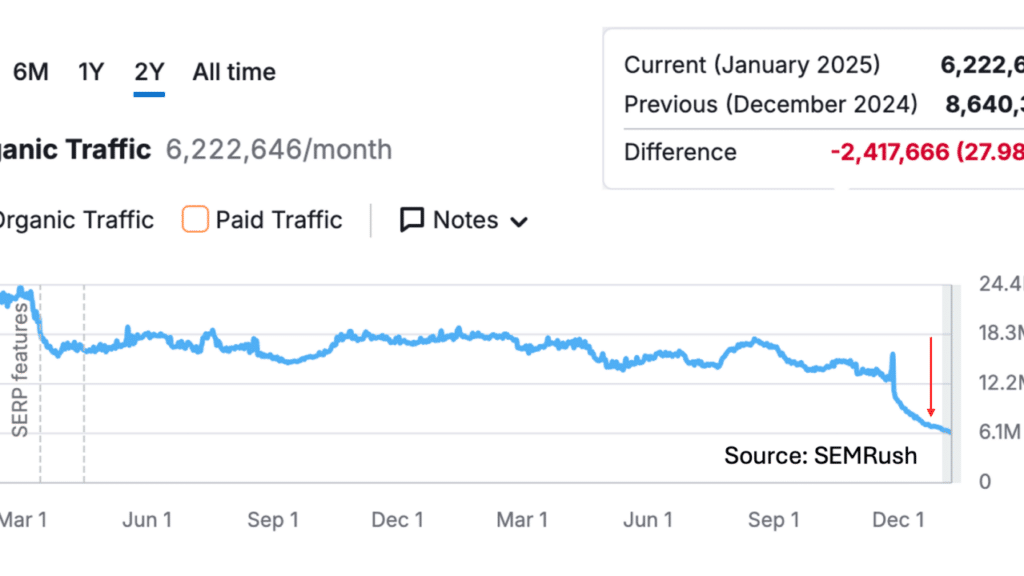

Countering Declining Organic Search Traffic: A Playbook for SaaS CMOs

Organic search has long been a cornerstone of SaaS growth, driving a steady stream of high intent visitors and sales conversations. But many SaaS CMOs are now witnessing an unsettling trend: declining organic traffic despite continued SEO effort. In late 2024, analysis of 1,600 SaaS companies revealed steep losses in organic search traffic for many…

Rank First, Write Second: A Practical SEO Strategy for B2B SaaS Marketers

Search engine optimisation (SEO) can feel like a slow burn—especially for B2B SaaS marketers who must balance lead generation, product marketing, and customer retention. In a crowded digital landscape where tens of thousands of SaaS companies are battling for search engine real estate, achieving top rankings is more critical than ever. But how do you…

The Best B2B SEO Agencies in 2025

Every modern business appreciates the value of Search Engine Optimisation (SEO) and will be eager to fire their websites up the Search Engine Results Pages (SERPs). However, marketing your products and services to B2B clients serves up a range of unique challenges compared to B2C. So, when seeking professional help, finding the best dedicated B2B…